Last Updated on August 22, 2019

Weka is the perfect platform for learning machine learning. It provides a graphical user interface for exploring and experimenting with machine learning algorithms on datasets, without you having to worry about the mathematics or the programming.

In a previous post we looked at how to design and run an experiment with 3 algorithms on a dataset and how to analyse and report the results.

Manhattan Skyline, because we are going to be looking at using Manhattan distance with the k-nearest neighbours algorithm.

Photo by Tim Pearce, Los Gatos, some rights reserved.

In this post you will discover how to use the Weka Experimenter to improve your results and get the most out of a machine learning algorithm. If you follow the step-by-step instructions, you will design and run your own algorithm tuning experiment in under five minutes.

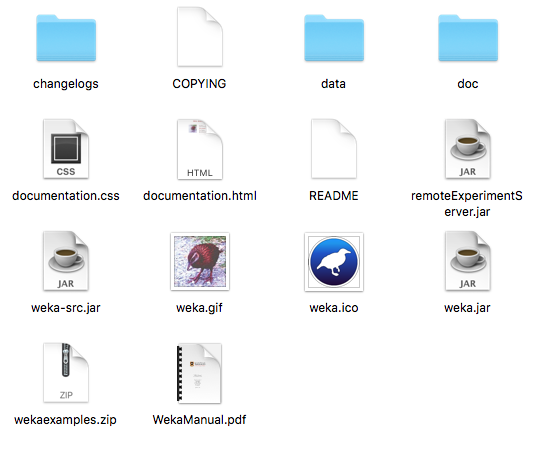

1. Download Weka and Install

Visit the Weka Download page and locate a version of Weka suitable for your computer (Windows, Mac or Linux).

Weka requires Java. You may already have Java installed and if not, there are versions of Weka listed on the download page (for Windows) that include Java and will install it for you. I’m on a Mac myself, and like everything else on Mac, Weka just works out of the box.

If you are interested in machine learning, then I know you can figure out how to download and install software on your own computer.

2. Start Weka

Start Weka. This may involve finding it in your program launcher or double-clicking on the weka.jar file. This will start the Weka GUI Chooser.

The Weka GUI Chooser lets you choose one of the Explorer, Experimenter, KnowledgeFlow and the Simple CLI (command line interface).

Click the “Experimenter” button to launch the Weka Experimenter.

The Weka Experimenter allows you to design your own experiments of running algorithms on datasets, run the experiments and analyze the results. It’s a powerful tool.

3. Design Experiment

Click the “New” button to create a new experiment configuration.

Test Options

The experimenter configures the test options for you with sensible defaults. The experiment is configured to use Cross Validation with 10 folds. It is a “Classification” type problem and each algorithm + dataset combination is run 10 times (iteration control).
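To make the default test options concrete, here is a minimal sketch of how 10 repetitions of 10-fold cross validation carve a dataset into train/test splits. It is plain Python, not Weka's implementation: the function name `repeated_kfold`, the seed handling and the instance count of 50 are all assumptions for illustration, and unlike Weka it does not stratify the folds by class.

```python
import random

def repeated_kfold(n_instances, folds=10, repetitions=10, seed=1):
    """Yield (repetition, fold, train_indices, test_indices) tuples."""
    for rep in range(repetitions):
        order = list(range(n_instances))
        random.Random(seed + rep).shuffle(order)  # fresh shuffle per repetition
        for fold in range(folds):
            test = order[fold::folds]             # every folds-th instance is held out
            held_out = set(test)
            train = [i for i in order if i not in held_out]
            yield rep, fold, train, test

# 50 is an arbitrary instance count chosen for illustration.
splits = list(repeated_kfold(50))
print(len(splits))  # 100 train/test evaluations per algorithm
```

Each algorithm and dataset combination is therefore trained and evaluated 100 times under these defaults, which is where the spread in the reported results comes from.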

Ionosphere Dataset

Let’s start out by selecting the dataset.

- In the “Datasets” section, click the “Add new…” button.

- Open the “data” directory and choose the “ionosphere.arff” dataset.

The Ionosphere Dataset is a classic machine learning dataset. The problem is to predict the presence (or not) of free electron structure in the ionosphere given radar signals. It comprises 17 pairs of real-valued radar signals (34 attributes) and a single class attribute with two values: good and bad radar returns.

You can read more about this problem on the UCI Machine Learning Repository page for the Ionosphere dataset.

Tuning k-Nearest Neighbour

In this experiment we are interested in tuning the k-nearest neighbor algorithm (kNN) on the dataset. In Weka this algorithm is called IBk (Instance Based Learner).

The IBk algorithm does not build a model. Instead, it generates a prediction for a test instance just-in-time. It uses a distance measure to locate the k “closest” instances in the training data for each test instance and uses those selected instances to make a prediction.
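The idea of a lazy, instance-based learner can be sketched in a few lines of Python. This is an illustration of k-nearest neighbours in general, not Weka's IBk code: the function names, the majority-vote rule and the tiny two-attribute training set are assumptions made up for the example.

```python
import math
from collections import Counter

def euclidean(a, b):
    """Straight-line distance between two equal-length numeric vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def knn_predict(train, query, k=1, distance=euclidean):
    """train is a list of (features, label) pairs; no model is built up front."""
    # All the work happens at prediction time: sort by distance to the query.
    neighbours = sorted(train, key=lambda item: distance(item[0], query))[:k]
    votes = Counter(label for _, label in neighbours)
    return votes.most_common(1)[0][0]

# Hypothetical training data with two attributes per instance.
train = [
    ((0.0, 0.0), "bad"), ((0.1, 0.2), "bad"),
    ((1.0, 1.0), "good"), ((0.9, 1.1), "good"), ((1.2, 0.8), "good"),
]
print(knn_predict(train, (1.0, 0.9), k=3))   # good
print(knn_predict(train, (0.05, 0.1), k=3))  # bad
```

Because the `distance` function is a parameter, swapping in a different measure changes which instances count as “close”, which is exactly what this experiment explores.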

In this experiment, we want to find out which distance measure works best for the IBk algorithm on the Ionosphere dataset. We will add 3 versions of this algorithm to our experiment:

Euclidean Distance

- Click “Add new…” in the “Algorithms” section.

- Click the “Choose” button.

- Click “IBk” under the “lazy” selection.

- Click the “OK” button on the “IBk” configuration.

This will add the IBk algorithm with Euclidean distance, the default distance measure.

Manhattan Distance

- Click “Add new…” in the “Algorithms” section.

- Click the “Choose” button.

- Click “IBk” under the “lazy” selection.

- Click on the name of the “nearestNeighborSearchAlgorithm” in the configuration for IBk.

- Click the “Choose” button for the “distanceFunction” and select “ManhattanDistance”.

- Click the “OK” button on the “nearestNeighborSearchAlgorithm” configuration.

- Click the “OK” button on the “IBk” configuration.

Select a distance measure for IBk

This will add the IBk algorithm with Manhattan Distance, also known as city block distance.

Chebyshev Distance

- Click “Add new…” in the “Algorithms” section.

- Click the “Choose” button.

- Click “IBk” under the “lazy” selection.

- Click on the name of the “nearestNeighborSearchAlgorithm” in the configuration for IBk.

- Click the “Choose” button for the “distanceFunction” and select “ChebyshevDistance”.

- Click the “OK” button on the “nearestNeighborSearchAlgorithm” configuration.

- Click the “OK” button on the “IBk” configuration.

This will add the IBk algorithm with Chebyshev Distance, also known as chessboard distance.
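If you are curious how the three distance measures differ, the textbook definitions are short enough to write out. The sketch below uses plain Python with function names of my own choosing; it ignores any attribute normalization or missing-value handling that Weka's distance classes may apply.

```python
def euclidean(a, b):
    """Square root of the summed squared differences."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5

def manhattan(a, b):
    """Sum of absolute differences (city block distance)."""
    return sum(abs(x - y) for x, y in zip(a, b))

def chebyshev(a, b):
    """Largest absolute difference on any single attribute (chessboard distance)."""
    return max(abs(x - y) for x, y in zip(a, b))

a, b = (0.0, 0.0), (3.0, 4.0)
print(euclidean(a, b), manhattan(a, b), chebyshev(a, b))  # 5.0 7.0 4.0
```

The same pair of points is 5, 7 or 4 units apart depending on the measure, so the set of nearest neighbours (and therefore the prediction) can change with the measure chosen.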

4. Run Experiment

Click the “Run” tab at the top of the screen.

This tab is the control panel for running the currently configured experiment.

Click the big “Start” button to start the experiment and watch the “Log” and “Status” sections to keep an eye on how it is doing.

5. Review Results

Click the “Analyse” tab at the top of the screen.

This will open up the experiment results analysis panel.

Algorithm Rank

The first thing we want to know is which algorithm was the best. We can do that by ranking the algorithms by the number of times a given algorithm beat the other algorithms.

- Click the “Select” button for the “Test base” and choose “Ranking”.

- Now click the “Perform test” button.

The ranking table shows the number of statistically significant wins each algorithm has had against all other algorithms on the dataset. A win means an accuracy that is better than the accuracy of another algorithm, where the difference was statistically significant.

Algorithm ranking in the Weka Experimenter for the Ionosphere dataset

We can see the Manhattan Distance variation is ranked at the top and that the Euclidean Distance variation is ranked at the bottom. This is encouraging: it looks like we have found a configuration that is better than the algorithm default for this problem.
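The ranking logic can be sketched as a simple tally. The code below is an assumption about how such a table could be built, not Weka's implementation: it takes a set of (winner, loser) pairs that were already judged statistically significant and orders the algorithms by wins minus losses. The single pair used here reflects the one significant difference reported in this post (Manhattan over Euclidean); treating every other pairing as not significant is an assumption.

```python
def rank_by_wins(algorithms, significant_wins):
    """Return rows of (name, wins - losses, wins, losses), best first."""
    table = []
    for name in algorithms:
        wins = sum(1 for winner, _ in significant_wins if winner == name)
        losses = sum(1 for _, loser in significant_wins if loser == name)
        table.append((name, wins - losses, wins, losses))
    # Stable sort: ties keep the order in which the algorithms were listed.
    return sorted(table, key=lambda row: row[1], reverse=True)

algorithms = ["Euclidean", "Manhattan", "Chebyshev"]
significant_wins = {("Manhattan", "Euclidean")}  # assumed outcome set
for row in rank_by_wins(algorithms, significant_wins):
    print(row)
```

With these inputs Manhattan comes out on top, Chebyshev sits in the middle with no wins or losses, and Euclidean is last.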

Algorithm Accuracy

Next we want to know what scores the algorithms achieved.

- Click the “Select” button for the “Test base” and choose the “IBk” algorithm with “Manhattan Distance” in the list and click the “Select” button.

- Click the check-box next to “Show std. deviations”.

- Now click the “Perform test” button.

In the “Test output” we can see a table with the results for 3 variations of the IBk algorithm. Each algorithm was run 10 times on the dataset and the accuracy reported is the mean, with the standard deviation in brackets, of those 10 runs.
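As a quick illustration of how a “mean (standard deviation)” entry is formed, here is a small sketch using Python's standard library. The accuracy values are made up for the example and are not the results of this experiment, and the use of the sample standard deviation is an assumption rather than a statement about what Weka computes.

```python
import statistics

def summarise(accuracies):
    """Format a list of accuracy percentages as 'mean (std)'."""
    mean = statistics.mean(accuracies)
    std = statistics.stdev(accuracies)  # sample standard deviation (assumed)
    return f"{mean:.2f} ({std:.2f})"

# Hypothetical accuracies from five runs, in percent.
print(summarise([90.0, 92.0, 88.0, 91.0, 89.0]))  # 90.00 (1.58)
```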

Table of algorithm classification accuracy on the Ionosphere dataset in the Weka Experimenter

We can see that IBk with Manhattan Distance achieved an accuracy of 90.74% (+/- 4.57%) which was better than the default of Euclidean Distance that had an accuracy of 87.10% (+/- 5.12%).

The little “*” next to the result for IBk with Euclidean Distance tells us that the accuracy results for the Manhattan Distance and Euclidean Distance variations of IBk were drawn from different populations; that is, the difference in the results is statistically significant.

We can also see that there is no “*” for the results of IBk with Chebyshev Distance indicating that the difference in the results between the Manhattan Distance and Chebyshev Distance variations of IBk was not statistically significant.

Summary

In this post you discovered how to configure a machine learning experiment with one dataset and three variations of an algorithm in Weka. You discovered how you can use the Weka Experimenter to tune the parameters of a machine learning algorithm on a dataset and analyze the results.

If you made it this far, why not:

- See if you can further tune IBk and get a better result (and leave a comment to tell us)

- Design and run an experiment to tune the k parameter of IBk.

WEKA Instructions

Overview

WEKA is a data mining suite that is open source and is available free of charge. If you want to be able to change the source code for the algorithms, WEKA is a good tool to use. It also reimplements many classic data mining algorithms, including C4.5, which is called J48 in WEKA. For more information, check out the WEKA web page.

Downloading and Invoking WEKA

You may run WEKA on the department's lab machines. However, it is probably more convenient to run it on your laptop for the assignments and project, so you may want to download it to your personal machine. You can download the latest version of WEKA to your laptop (or Linux machine) via the WEKA web page. If for some reason that link is broken, just type 'WEKA' into Google and the WEKA home page should be listed first in the results. You should download the latest stable version of WEKA. As of Fall 2020 that version is 3.8.4. Once it is downloaded, you just double click on the program to launch it (if it does not create an icon automatically, go to the list of programs and start it that way). You will want to run it with the console.

Mac users sometimes have issues installing Weka. A student emailed me with some helpful advice for Mac users. If you are getting errors when you try to install Weka, then go to 'System Preferences > Security & Privacy', and when the window pops up, go to the “Allow apps downloaded from” area and then select “App Store and identified developers”. This should provide the necessary access. For more information, you can consult this article. Once you have installed Weka you may want to restore the original values.

WEKA Documentation and Tutorial

There is documentation for WEKA available from the WEKA web page. If you click on 'documentation' you will find many useful resources, including a WEKA manual. If you scroll down, you will find direct links to the latest WEKA manual. As of 2-11-2018 that is version 3.8.2. The tutorial that I will use in class is available below (we will only cover the Explorer mode):

Note: The tutorial is now slightly out of sync with the version of Weka. For example, in the tutorial the term 'Neural network' is used but in WEKA it is now called 'Multilayer Perceptron'. Also, the results in the tutorial for J48 on the iris data are without the discretization step (so if you follow the tutorial and discretize the variables, undo it before going on). Also, the results are with the 'binary-split' flag set to true even though this apparently is not set in the tutorial. We will not be going over the 'User Classifier', so when you get to it you can skip to the Clustering section of the tutorial.

Inputting Data Into WEKA

You will need to input your dataset into WEKA. You start the process by clicking on 'Open file' while in the 'Preprocess' tab of the Explorer (this is the tab that you are initially in when WEKA starts). You can then browse to the location of the data file on your PC (you can use 'Open URL' if the dataset is on the web). WEKA ideally would like an .arff file, which contains a header that describes the variables and the data types of the variables, followed by the data itself. The format of the .arff file is available from the various WEKA manuals.

There are some sample data sets that come with WEKA that you can access and play with. These are all in a data directory where the program is installed (on PCs, under 'program files' and on the LC machines they should be under /usr/local/share/weka-3.6.4/data). Thus, when you install them to your PC, you might find them under C:/Program Files/Weka-3-6/data. But just in case you cannot find them, I have a local copy of the sample Weka databases. If you want to enter the url (using the 'Open URL' option), it is: http://storm.cis.fordham.edu/~gweiss/data-mining/weka-data/. Then simply add the filename at the end of this string (e.g., 'iris.arff').

Very often you will want to use a dataset that does not have an .arff file. I think the best method may just be to manually create the .arff file, although you can try to import a csv file.

Creating a .arff file

You should familiarize yourself with the format of a .arff file before you try to create the .arff file. The format is fairly simple. You start with @relation, then have a bunch of @attribute statements, and then have a @data command, followed by the data, one record per line. Very often you will have a file with the data only. This could be a regular .csv file, or it could be a .data file associated with a C4.5 data set. Note that C4.5 uses .data files for the data and .names files to explain the format of the data. The .names file contains essentially the same info that goes into the top of the .arff file, although in a different format.

Some things to watch out for. As I found out, the .arff file should not have any blank lines. A blank line after the @relation command (before the @attribute commands) causes an error! This may not be apparent from the documentation on the .arff file. I suggest that beyond just looking at the .arff file documentation, you look on the web for an actual .arff file.
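To show the layout described above, here is a small Python sketch that assembles the text of a .arff file with @relation, @attribute and @data sections and no blank lines. The helper name `to_arff`, the relation name and the three attributes are hypothetical, invented for this example; the sketch also does no quoting, so it assumes names and values contain no spaces or commas.

```python
def to_arff(relation, attributes, rows):
    """Build .arff text. attributes is a list of (name, spec) pairs, where
    spec is either a type string such as 'NUMERIC' or a list of nominal values."""
    lines = [f"@relation {relation}"]
    for name, spec in attributes:
        if isinstance(spec, (list, tuple)):
            spec = "{" + ",".join(spec) + "}"  # nominal values go in curly brackets
        lines.append(f"@attribute {name} {spec}")
    lines.append("@data")
    for row in rows:
        lines.append(",".join(str(value) for value in row))  # one record per line
    return "\n".join(lines) + "\n"

# Hypothetical dataset for illustration only.
text = to_arff(
    "weather_demo",
    [("temperature", "NUMERIC"), ("outlook", ["sunny", "rainy"]), ("play", ["yes", "no"])],
    [(21.5, "sunny", "yes"), (15.0, "rainy", "no")],
)
print(text)
```

Writing `text` to a file with an .arff extension gives you something to compare against an actual .arff file when you are debugging your own.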

You will need to edit the file with some type of text editor. Wordpad works fine. Some text editors may not preserve the line breaks and will cause a problem. If you are familiar with Linux, you could always edit it on a Linux machine with vi or emacs (but that is not necessary). On Windows it may be easiest to name the file with a .txt extension, since that makes it easier to open with a text editor like Wordpad. But eventually you will need to rename it to a .arff file extension. Renaming extensions in Windows is not always easy. Here is some info on how to do that:

You need to make the file extensions visible and editable, which they probably will not be by default. The exact method depends on the version of Windows. In my current version I go to the Windows explorer window and then select the 'tools' menu option, then 'Folder Options', then 'view', and then deselect the checkbox for 'hide extensions for known file types.' When you do not hide the extensions, you can edit them easily and thus change them from .txt to .arff and back again.

An alternative would be to copy it to a Linux machine (maybe with FTP) and then change the name and copy it back. But while this may seem like overkill, modifying the Windows file options so you can edit the extension can also be tricky.

Now, in many cases you will want to create an .arff file from a .data and .names file, since many datasets follow the C4.5 format. You can copy the .data file to a .txt extension. Thus, if you are working with adult.data, rename it to adult.txt (or figure out how to open adult.data with Wordpad by using the 'open with' option). Then copy the part of the .names file that names and describes the variables/features. Put that at the top of the .txt file. But now you have to essentially convert from the format for the .names file to the format for the .arff header. The formats are actually fairly similar. One difference is that the .names file uses 'continuous' whereas the .arff file uses 'NUMERIC'. Also, the discrete features that specify the set of possible values do not use curly brackets in the .names format but do in the .arff format. One should be able to convert about a dozen features from one format to another in a few minutes. However, there is one key difference. The .names file will list the class variable first, even though it shows up as the last element in the data record. The .arff file uses the more natural convention where the class variable shows up in the corresponding position, which in this case means it would be at the end. Thus, you need to move it from the first to the last position when converting from .names to .arff.
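The conversion rules just described are mechanical enough to sketch in Python. This is a rough illustration under simplifying assumptions, not a complete C4.5 parser: it assumes one entry per line, each ending in a period, with '|' starting a comment, and it does not quote values containing spaces. The function name, the default class attribute name 'class' and the sample .names text are all hypothetical.

```python
def names_to_arff_header(names_text, relation, class_name="class"):
    """Convert simple C4.5 .names text into an .arff header string."""
    entries = []
    for raw in names_text.splitlines():
        line = raw.split("|", 1)[0].strip()  # drop comments and whitespace
        if line:
            entries.append(line.rstrip("."))
    # The first entry lists the class values; it moves to the end in .arff.
    class_values = [v.strip() for v in entries[0].split(",")]
    lines = [f"@relation {relation}"]
    for entry in entries[1:]:
        name, spec = entry.split(":", 1)
        spec = spec.strip()
        if spec == "continuous":
            arff_type = "NUMERIC"
        else:
            arff_type = "{" + ",".join(v.strip() for v in spec.split(",")) + "}"
        lines.append(f"@attribute {name.strip()} {arff_type}")
    lines.append(f"@attribute {class_name} " + "{" + ",".join(class_values) + "}")
    lines.append("@data")
    return "\n".join(lines)

# Hypothetical .names content for illustration only.
sample = """| class values come first in a .names file
yes, no.
age: continuous.
outlook: sunny, overcast, rainy.
"""
header = names_to_arff_header(sample, "demo")
print(header)
```

The output lists the two features first and the class attribute last, so the records from the .data file can be pasted directly after the @data line.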

Be careful in the conversion process because the error messages are not very helpful.

If you want to use the .csv importer, then after you have hit the 'Open file' button in WEKA Explorer and have browsed to the correct directory, set the value in the 'Files of type' drop down list to choose '.csv data files.' Then click open. WEKA should successfully read in the data.